Data poisoning has become the foundation of modern digital systems, powering analytics platforms, machine learning models, and AI-driven decision-making across industries. As organizations increasingly depend on large volumes of data to train models and automate critical processes, attackers have shifted their focus from traditional infrastructure attacks to the data itself.

Data poisoning attacks are subtle and difficult to detect. They can silently degrade model performance, introduce bias, manipulate outcomes, or embed hidden backdoors that activate under specific conditions. The impact of poisoned data can range from flawed business insights to severe security failures, especially in AI-driven environments such as fraud detection, cybersecurity, healthcare, and autonomous systems.

Rising Dependence on Data-Driven and AI Systems

Data-driven technologies and AI systems have become integral to how modern organizations operate and compete. Businesses now rely on machine learning models and analytics platforms to detect fraud, secure networks, personalize customer experiences, optimize supply chains, and support strategic decision-making.

This growing dependence on data has fundamentally changed the threat landscape. The accuracy, reliability, and trustworthiness of AI systems are directly tied to the quality of the data they consume. SecureLayer7 notes that growing AI reliance expands attack surfaces, enabling greater data manipulation and outcome influence

Why Data Poisoning has Become a Serious Cybersecurity and AI Risk

Data poisoning has emerged as a serious risk because it targets the foundation of AI systems rather than exploiting traditional software vulnerabilities. Instead of attacking applications or infrastructure, adversaries manipulate data during collection, labelling, or training phases to influence how models learn and behave.

The widespread use of automated data ingestion, third-party datasets, open data sources, and continuous model retraining has further increased exposure to data poisoning attacks. In AI-driven environments, poisoned data can introduce hidden biases, degrade model accuracy, or implant backdoors that activate only under specific conditions.

High-Level Impact on Machine Learning, Analytics, and Business Decisions

The impact of data poisoning extends beyond technical model failures. In machine learning systems, poisoned data can lead to incorrect predictions, biased outcomes, or unpredictable behavior that undermines trust in AI-driven decisions. In analytics platforms, it can distort insights, skew performance metrics, and misguide leadership decisions based on flawed intelligence.

For businesses, these effects translate into tangible consequences, including financial losses, regulatory violations, operational disruptions, and reputational damage. In high-stakes environments, such as cybersecurity monitoring, fraud prevention, healthcare diagnostics, and autonomous decision systems – data poisoning can have serious real-world implications.

Understanding Data Poisoning

Data poisoning is a growing threat that targets the integrity of datasets used for analytics, machine learning, and automated decision-making. Unlike traditional cyberattacks that exploit software vulnerabilities, data poisoning attacks the foundation of trust by manipulating the data systems depend on.

Understanding how data poisoning works, how it differs from routine data quality issues, and why data integrity is essential is key to building secure and reliable AI-driven environments.

How Malicious Data Manipulation Differs from Natural Data Quality Issues

It is important to distinguish malicious data poisoning from common data quality problems. Natural data quality issues – such as missing values, duplicate records, noisy data, or labelling inconsistencies are typically unintentional and arise from process gaps, system limitations, or human error.

Data poisoning, however, is deliberate and adversarial. Attackers intentionally craft or inject data to achieve specific outcomes, such as bypassing security controls, skewing analytics results, or embedding hidden backdoors in AI models.

Why Data Integrity is Foundational to Secure Decision-Making

Data integrity is foundational to secure decision-making because modern organizations increasingly rely on analytics and AI systems to guide critical actions. From cybersecurity response and fraud prevention to financial forecasting and operational optimization, decisions are only as reliable as the data behind them.

In AI-driven environments, the consequences of compromised data integrity are amplified. Poisoned data can influence model behavior long after the initial attack, eroding trust in automated systems and increasing exposure to security, regulatory, and reputational risks.

How AI Data Poisoning Targets Machine Learning Training Pipelines

AI data poisoning primarily targets the training pipeline, where models learn patterns and behaviors from historical data. Attackers exploit weaknesses in data collection, labelling, ingestion, or preprocessing stages to inject malicious samples.

In real-world environments, training pipelines are often automated and continuously retrained to adapt to evolving conditions. This improves model responsiveness, it also expands the attack surface. Poisoned data introduced at any stage of the pipeline can propagate through retraining cycles, reinforcing incorrect patterns over time.

Training-Time vs Inference-Time Poisoning

AI data poisoning attacks can occur at different stages of the machine learning lifecycle, most commonly during training-time or inference-time. Training-time poisoning focuses on corrupting the datasets used to build or update the model. These attacks are often persistent, as poisoned data becomes embedded in the model’s learned behavior and continues to influence outcomes long after the initial compromise.

Inference-time poisoning, by contrast, targets the data fed into a deployed model during prediction or evaluation. While often associated with adversarial attacks, inference-time poisoning can also involve sustained manipulation of input data streams, such as sensor data or user-generated content, to influence decisions without altering the underlying model.

Why AI Systems Are Uniquely Vulnerable to Poisoned Datasets

AI systems are uniquely vulnerable to poisoned datasets because they rely on statistical learning rather than explicit rules. Machine learning models do not inherently understand whether data is malicious; they learn whatever patterns are present in the data they are given.

Many AI systems operate as black boxes, making it difficult to trace specific outputs back to individual data points. This lack of transparency complicates detection and root cause analysis when poisoned data affects outcomes. Combined with large-scale data ingestion, third-party data dependencies, and continuous learning, these factors make AI data poisoning a stealthy and high-impact threat.

How Data Poisoning Attacks Work

Data poisoning attacks are designed to exploit trust in data rather than weaknesses in code or infrastructure. By targeting the points where data is collected, processed, and reused, attackers can quietly influence AI models and analytics systems over time.

Following attacks are particularly dangerous because poisoned data often appears legitimate, allowing it to move through systems without triggering traditional security controls.

Common Entry Points for Injecting Malicious Data

Attackers typically introduce poisoned data at stages where data enters the system or is aggregated from multiple sources. Common entry points include user-generated content, application logs, telemetry feeds, third-party datasets, and data labelling workflows.

In environments that rely on automated ingestion or continuous data collection, even brief access to these entry points can be enough to insert malicious data. Limited validation, weak access controls, and lack of data provenance tracking make it easier for poisoned data to blend in with legitimate records and persist across datasets.

Manipulation of Labels, Features, or Training Samples

Manipulation of labels, features, or training samples represents core tactics in data poisoning attacks, subtly corrupting AI models to produce flawed outputs.

- Label Manipulation: Attackers flip labels on training samples, such as changing benign to malicious in cybersecurity datasets, distorting decision boundaries without altering features.

- Feature Manipulation: Feature manipulation, often via clean-label attacks, perturbs input data while keeping original labels intact, making poisons hard to detect visually.

- Training Sample Manipulation: Directly injecting or altering samples, combining label and feature changes – amplifies poisoning impact, such as embedding outliers that propagate biases across analytics.

How Poisoned Data Propagates Through AI and Analytics Systems

Poisoned data rarely remains confined to a single model or dataset. In AI systems, compromised samples become part of training datasets and are reinforced through retraining cycles, embedding malicious patterns into model behavior. In analytics platforms, poisoned data can distort metrics, dashboards, and reports, leading to flawed insights that influence business decisions.

Because organizations often share data across teams and reuse datasets for multiple purposes, poisoned data can spread laterally, amplifying its impact and making remediation more complex. Over time, this propagation undermines trust in both AI outputs and data-driven decision-making across the organization.

Common Types of Data Poisoning Attacks

Data poisoning attacks take many forms, each designed to manipulate how machine learning models learn from data and behave in production. These attacks vary in sophistication – from simple label manipulation to stealthy backdoors and supply chain compromises – but share a common goal: corrupting model outcomes without triggering immediate detection.

Following are the most common and impactful types of data poisoning attacks observed in real-world AI systems.

Label-Flipping and Mislabelling Attacks

Label-flipping and mislabelling attacks manipulate the ground-truth labels in training data to mislead machine learning models. By intentionally changing correct labels – such as marking malicious activity as safe – attackers distort learning patterns and weaken model accuracy, even when only a small portion of the dataset is affected.

The consequences include biased predictions, missed threats, and unreliable AI decisions in areas like cybersecurity, fraud detection, and healthcare. Because mislabelled data often appears valid during training and testing, label-flipping attacks are hard to detect, making continuous label validation, auditing, and strong data governance critical for prevention.

Backdoor and Trigger-Based Poisoning

Backdoor poisoning attacks embed hidden patterns – known as triggers – into a subset of training data. The model behaves normally under standard conditions but produces attacker-controlled outputs when the trigger is present in input data.

Triggers can be visual patterns, keywords, or specific data sequences that are imperceptible during normal validation. These attacks are particularly dangerous because they evade standard accuracy checks and only activate in targeted scenarios, making them ideal for stealthy exploitation in autonomous systems, biometric models, or AI-driven security controls.

Clean-Label Data Poisoning

Clean-label data poisoning is a stealthy attack technique where malicious training data is injected without altering labels or introducing obvious anomalies. The poisoned samples appear legitimate and pass validation checks, yet are carefully crafted to subtly shift a model’s decision boundaries.

Because clean-label poisoning blends seamlessly into trusted datasets, it is difficult to detect using standard quality or labeling checks. These attacks pose a serious risk to high-value AI systems in areas such as healthcare, finance, and biometric authentication.

Data Injection and Data Manipulation Attacks

In data injection attacks, adversaries introduce malicious data directly into the training pipeline – often through public data sources, user-generated content, or unsecured data ingestion mechanisms. Data manipulation attacks, on the other hand, involve altering existing datasets by modifying features, distributions, or values.

These attacks exploit weak data governance and inadequate validation controls. Over time, poisoned data skews model behavior, leading to unreliable predictions, biased outcomes, or unsafe automated decisions, especially in continuously learning or retraining systems.

Supply Chain-Based Data Poisoning

Supply chain data poisoning targets third-party datasets, pre-trained models, or external data providers used during model development. Instead of attacking the model directly, adversaries compromise upstream data sources that organizations inherently trust.

This attack vector is particularly concerning in modern AI ecosystems that rely heavily on open-source datasets, shared model repositories, and outsourced data pipelines. Once poisoned components are integrated, the resulting vulnerabilities propagate across multiple systems, amplifying risk at scale.

Real-World Impact of Data Poisoning Attacks

Data poisoning attacks have real-world consequences that extend far beyond technical model failures. By corrupting the data that AI systems rely on, these attacks can silently degrade decision quality, introduce bias, and trigger unsafe or insecure behaviors in production environments.

Degraded Model Performance and Biased Outcomes

Data poisoning directly undermines the accuracy and reliability of machine learning models, leading to degraded performance over time. Poisoned training data distorts learned patterns, causing increased error rates, unstable predictions, and inconsistent results across similar inputs.

Beyond accuracy loss, poisoned data can introduce or amplify bias in AI systems. Skewed or manipulated datasets may disproportionately affect specific user groups, regions, or behaviors, resulting in unfair or discriminatory outcomes. In domains such as fraud detection, healthcare, hiring, and financial services, these biased decisions can cause real-world harm, regulatory exposure, and long-term erosion of trust in AI-driven systems.

Security Risks from Manipulated AI Behavior

Data poisoning can cause AI systems to behave in unpredictable and insecure ways, creating critical security risks across automated decision-making environments. Manipulated models may misclassify threats, bypass detection mechanisms, or fail to respond to malicious activity under specific conditions.

Poisoned AI models may contain hidden backdoors that activate only when a specific trigger is present, allowing attackers to control outcomes without raising alarms. Because the model appears to function normally during routine testing, these security weaknesses often remain undetected until exploited in production.

Business, Reputational, and Compliance Risks

Business, reputational, and compliance impacts are critical considerations for any organization, as they directly influence long-term success and stakeholder trust.

Data Poisoning vs Other AI Attacks

AI systems face a growing range of security threats across data collection, model training, and deployment. While many AI attacks focus on exploiting models at runtime, data poisoning operates earlier in the lifecycle by manipulating the data that shapes how models learn.

Data Poisoning vs Adversarial Attacks

Data poisoning attacks occur during the training phase, where attackers manipulate datasets to influence how a model learns. The impact is long-term: once poisoned data is absorbed, the model may consistently produce incorrect or biased outputs – even after deployment.

Adversarial attacks target models at runtime by crafting inputs designed to mislead predictions. These attacks do not alter the model itself; they exploit weaknesses in decision boundaries during inference.

Data Poisoning vs Model Inversion and Extraction

Data poisoning attacks and model exploitation attacks target AI systems in fundamentally different ways. Data poisoning focuses on corrupting the data used to train models, influencing how they learn and behave over time.

Model inversion and model extraction attacks aim to infer sensitive training data or replicate a model’s functionality by analyzing its outputs. Inversion and extraction primarily threaten data privacy and intellectual property, data poisoning undermines the integrity and trustworthiness of the AI system itself – making its impact broader, harder to detect.

Why Data Poisoning Is Harder to Detect Than Runtime Attacks

Data poisoning attacks exploit trust in data pipelines rather than weaknesses in deployed systems. Poisoned samples often look statistically valid, carry correct labels, and pass quality checks – especially in clean-label or supply chain-based attacks.

Because the damage occurs before deployment, traditional runtime security controls, monitoring tools, and anomaly detection systems offer limited protection. By the time issues surface – such as model drift, unexplained bias, or silent failures the root cause may already be deeply embedded in historical training data.

How to Detect Data Poisoning

Detecting data poisoning requires a proactive and systematic approach across the AI lifecycle. Unlike conventional attacks that trigger immediate alerts, data poisoning operates quietly by blending malicious samples into legitimate datasets, making early detection difficult. Subtle changes in data patterns, model behavior, or performance trends are often the only indicators.

Statistical Anomalies and Data Drift Indicators

Statistical anomalies are often the earliest warning signs of data poisoning. Sudden shifts in feature distributions, unexpected changes in class balance, or abnormal correlations between variables may indicate that malicious or manipulated data has entered the training pipeline.

Data drift indicators provide deeper insight into how data behavior evolves over time. Covariate drift occurs when input data distributions change, while concept drift reflects changes in the relationship between inputs and outputs.

Model Performance Degradation Signals

Model performance degradation is a key indicator of potential data poisoning. When poisoned data influences training, models may exhibit a gradual decline in accuracy, precision, or recall, even though the underlying architecture and code remain unchanged.

Another common signal is an increase in false positives or false negatives, unstable predictions, or inconsistent behavior across similar inputs. These patterns suggest that the model’s learned decision boundaries have been distorted. Continuous performance monitoring, granular metric analysis, and comparison against historical baselines are essential to detect these subtle but impactful signs of data poisoning early.

Dataset Validation and Integrity Checks

Strong dataset validation mechanisms act as a frontline defense against poisoning. This includes verifying data provenance, checking label consistency, detecting duplicate or near-duplicate samples, and validating schema and feature ranges.

Integrity checks such as cryptographic hashing, access controls, and versioned datasets help ensure that training data has not been tampered with. When combined with human review for high-risk datasets, these measures reduce the likelihood of poisoned data silently entering the pipeline.

Role of Monitoring and Continuous Evaluation

Monitoring and continuous evaluation are critical for identifying data poisoning that may bypass initial validation checks. Because poisoned data can enter systems gradually or through retraining cycles, one-time reviews are insufficient to maintain model integrity.

Continuous evaluation enables teams to track data distributions, model performance trends, and decision consistency over time. By comparing current behavior against trusted baselines, organizations can identify deviations caused by poisoned data, trigger alerts, and initiate controlled retraining or rollback actions.

Preventing Data Poisoning and AI Data Poisoning

Preventing data poisoning and AI data poisoning requires safeguarding AI systems at their most vulnerable point – data. Because poisoned data can silently compromise models long before deployment, effective prevention goes beyond traditional security controls. It demands strong data governance, secure pipelines, and resilient training practices that protect the entire AI lifecycle from intentional manipulation and long-term risk.

Securing Data Collection and Ingestion Pipelines

The first line of defense against data poisoning is a secure data ingestion pipeline. All data sources – internal systems, user-generated inputs, APIs, and third-party feeds – should be authenticated, validated, and rate-limited.

Input sanitization, schema validation, and automated quality checks help prevent malicious or malformed data from entering training workflows. For high-risk or external sources, staged ingestion reduces the likelihood of poisoned data being introduced at scale.

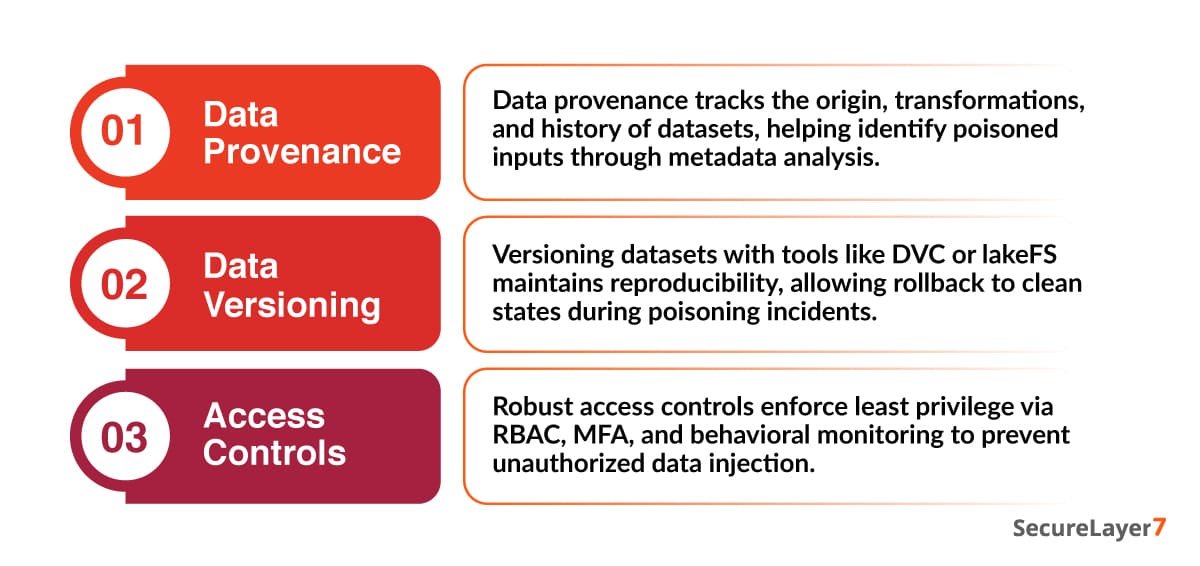

Data Provenance, Versioning, and Access Controls

Data provenance, versioning, and access controls form essential defenses against data poisoning in AI systems by ensuring data integrity from source to deployment. Following practices enable traceability, reproducibility, and restricted exposure in machine learning pipelines.

Robust Training Techniques and Anomaly-Resistant Models

Building resilience into the training process itself helps mitigate the impact of poisoned samples. Robust training techniques – such as outlier-resistant loss functions, data sanitization before training, and ensemble-based learning, reduce sensitivity to malicious inputs.

Techniques like differential training, influence analysis, and sample weighting can limit how much any single data point affects model behavior. These approaches make targeted poisoning attacks more difficult and less effective, even if some malicious data slips through controls.

Conclusion

Data poisoning is a rapidly growing threat that targets the very foundation of AI-driven systems data integrity. By silently influencing how models learn, these attacks can degrade performance, introduce bias, and create hidden security risks that persist long after deployment. As reliance on AI increases, the impact of poisoned data extends beyond technical failures to serious business, reputational, and compliance consequences.

Proactive detection, prevention, and secure data practices are essential to building resilient and trustworthy AI. Organizations must embed data poisoning defenses across the ML lifecycle through strong governance, continuous monitoring, and validated data pipelines.

SecureLayer7 helps enterprises protect AI systems by securing data at every stage – because trusted AI starts with trusted data.

Frequently Asked Questions (FAQs)

Data poisoning is a cyberattack technique in which attackers deliberately manipulate, inject, or alter data used to train analytics systems or machine learning models. By compromising data integrity, attackers influence how systems learn and behave, leading to inaccurate predictions, biased decisions, or silent system failures.

AI data poisoning specifically targets machine learning and artificial intelligence systems during their training or retraining phases. Attackers introduce malicious or misleading data that causes models to learn incorrect patterns or behave in attacker-controlled ways.

Data poisoning attacks exploit weaknesses in data collection, labelling, or ingestion pipelines. Attackers may inject malicious samples, manipulate labels, alter feature values, or compromise third-party data sources.

Common data poisoning techniques include label-flipping and mislabeling, backdoor or trigger-based poisoning, clean-label poisoning, data injection and manipulation, and supply chain-based data poisoning.

Data poisoning is dangerous because it undermines AI systems at their foundation – data integrity. Poisoned models can make biased, insecure, or unsafe decisions while appearing to function normally.